
These work to form an expression and define the particular order in which tokens must be placed.

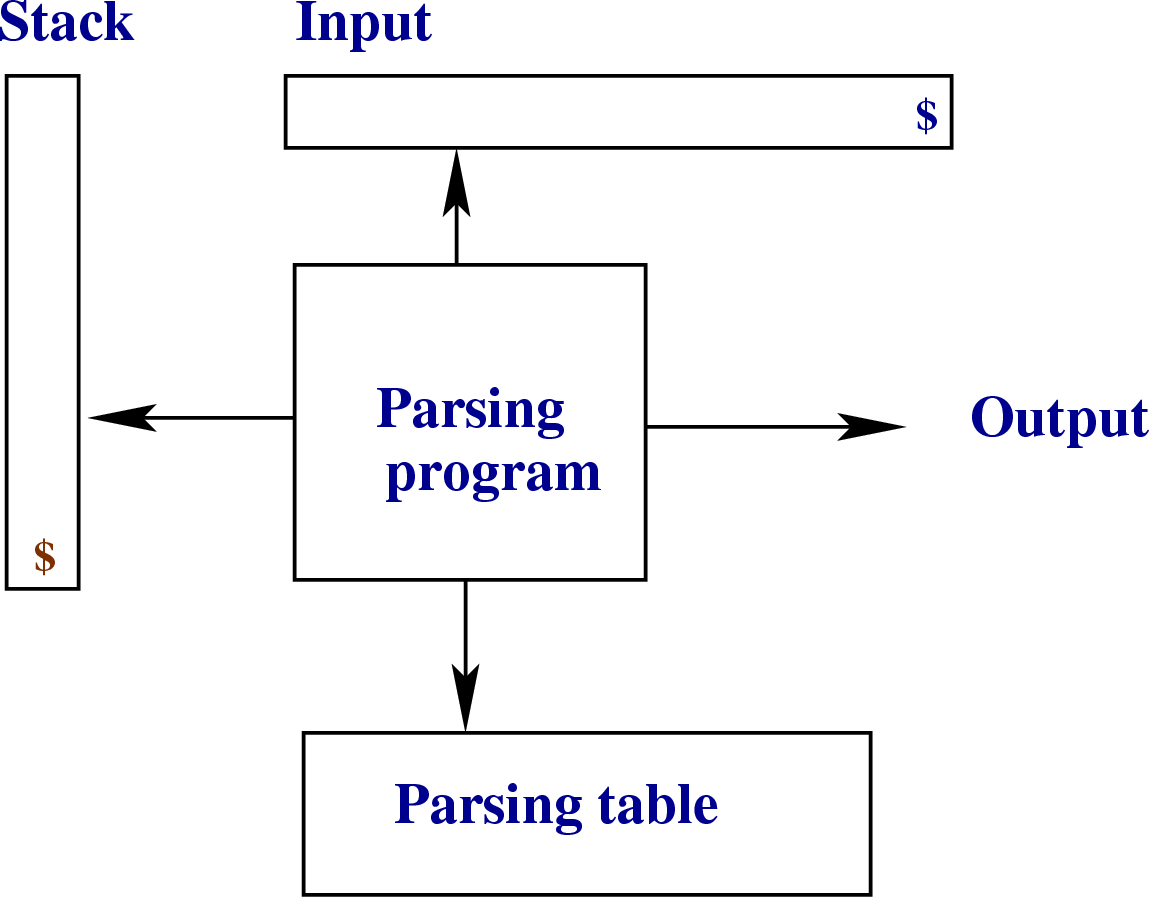

This makes use of a context-free grammar that defines algorithmic procedures for components. Syntactic Analysis: Checks whether the generated tokens form a meaningful expression. A token is the smallest unit in a programming language that possesses some meaning (such as +, -, *, “function”, or “new” in JavaScript). Lexical Analysis: A lexical analyzer is used to produce tokens from a stream of input string characters, which are broken into small components to form meaningful expressions. The overall process of parsing involves three stages: To do so, it follows a set of defined rules called “grammar”. The parser is commonly used as a component of the translator that organizes linear text in a structure that can be easily manipulated (parse tree). This task is usually performed by a translator (interpreter or compiler). In order for the code written in human-readable form to be understood by a machine, it must be converted into machine language.
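The lexical analysis stage can be sketched in Python as a minimal regex-based tokenizer. The token categories (`NUMBER`, `IDENT`, `KEYWORD`, `OP`, `LPAREN`, `RPAREN`), the two-word keyword set, and the `tokenize` function are hypothetical illustrations, not taken from any language specification; a real JavaScript lexer recognizes many more token types.

```python
import re
from typing import List, NamedTuple


class Token(NamedTuple):
    kind: str  # token category, e.g. "NUMBER" or "OP"
    text: str  # the exact characters taken from the input


# Hypothetical token categories for a tiny language (not a real JavaScript spec).
TOKEN_SPEC = [
    ("NUMBER", r"\d+"),
    ("IDENT", r"[A-Za-z_]\w*"),
    ("OP", r"[+\-*/]"),
    ("LPAREN", r"\("),
    ("RPAREN", r"\)"),
    ("SKIP", r"\s+"),
]
# Hypothetical keyword set: identifiers with these spellings become KEYWORD tokens.
KEYWORDS = {"function", "new"}
MASTER = re.compile("|".join(f"(?P<{name}>{pattern})" for name, pattern in TOKEN_SPEC))


def tokenize(source: str) -> List[Token]:
    """Break a stream of input characters into a list of tokens."""
    tokens: List[Token] = []
    pos = 0
    while pos < len(source):
        match = MASTER.match(source, pos)
        if match is None:
            raise SyntaxError(f"unexpected character {source[pos]!r} at position {pos}")
        kind, text = match.lastgroup, match.group()
        if kind == "IDENT" and text in KEYWORDS:
            kind = "KEYWORD"
        if kind != "SKIP":  # whitespace separates tokens but carries no meaning
            tokens.append(Token(kind, text))
        pos = match.end()
    return tokens


print(tokenize("new x + 42"))
```

For the input `new x + 42`, the tokenizer yields a `KEYWORD`, an `IDENT`, an `OP`, and a `NUMBER` token. Whitespace is consumed but never emitted, and any character that fits no category is reported as an error.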

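The syntactic analysis stage can be sketched as a recursive-descent parser in Python, with one method per grammar rule. It assumes a hypothetical context-free grammar for arithmetic expressions (shown in the comments) and builds a simplified tree of nested tuples in place of a full parse tree. It includes its own tiny lexer so that it runs on its own. The grammar, the `Parser` class, and the `parse` helper are illustrative assumptions, not the method of any particular compiler.

```python
import re
from typing import List, Tuple, Union

# Hypothetical context-free grammar for a tiny arithmetic language (EBNF):
#   expr   -> term   (("+" | "-") term)*
#   term   -> factor (("*" | "/") factor)*
#   factor -> NUMBER | "(" expr ")"
Tree = Union[int, Tuple[str, "Tree", "Tree"]]


def tokenize(source: str) -> List[str]:
    """Minimal lexer: numbers, four operators, parentheses; other characters pass through."""
    return re.findall(r"\d+|[+\-*/()]|\S", source)


class Parser:
    def __init__(self, tokens: List[str]) -> None:
        self.tokens = tokens
        self.pos = 0

    def peek(self) -> str:
        # An empty string marks the end of the token stream.
        return self.tokens[self.pos] if self.pos < len(self.tokens) else ""

    def advance(self) -> str:
        tok = self.peek()
        self.pos += 1
        return tok

    def parse(self) -> Tree:
        tree = self.expr()
        if self.pos < len(self.tokens):  # leftover tokens violate the grammar
            raise SyntaxError(f"unexpected token {self.peek()!r}")
        return tree

    def expr(self) -> Tree:
        node = self.term()
        while self.peek() in ("+", "-"):
            op = self.advance()
            node = (op, node, self.term())  # left-associative
        return node

    def term(self) -> Tree:
        node = self.factor()
        while self.peek() in ("*", "/"):
            op = self.advance()
            node = (op, node, self.factor())
        return node

    def factor(self) -> Tree:
        tok = self.advance()
        if tok.isdecimal():
            return int(tok)
        if tok == "(":
            node = self.expr()
            if self.advance() != ")":
                raise SyntaxError("expected ')'")
            return node
        if tok == "":
            raise SyntaxError("unexpected end of input")
        raise SyntaxError(f"unexpected token {tok!r}")


def parse(source: str) -> Tree:
    return Parser(tokenize(source)).parse()


print(parse("2 * (3 + 4)"))
```

Because `term` sits below `expr` in the grammar, `*` and `/` bind more tightly than `+` and `-`, so `2 * (3 + 4)` becomes `("*", 2, ("+", 3, 4))`. An input such as `2 +` does not match any rule and raises `SyntaxError`. This is how the grammar fixes the order in which tokens must be placed.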